The detention of Pavel Durov is being portrayed as a result of the EU Digital Services Act. But having spent my day reading the EU Digital Services Act (a task I would not wish upon my worst enemy), it does not appear to me to say what it is being portrayed as saying.

EU Acts are horribly dense and complex, and are published as numbered “Recitals” followed by “Articles”. Both cover much the same ground: the Articles are the operative provisions, while the more detailed Recitals set out how they are meant to be read, and it is the Recitals that are quoted below. The Articles are entirely consistent with this.

So, for example, Recital 20 means the “intermediary service”, in this case Telegram, loses its exemption from liability for illegal activity using its service only if it has deliberately collaborated in that activity.

Providing encryption or anonymity specifically does not qualify as deliberate collaboration in illegal activity.

(20) Where a provider of intermediary services deliberately collaborates with a recipient of the services in order to undertake illegal activities, the services should not be deemed to have been provided neutrally and the provider should therefore not be able to benefit from the exemptions from liability provided for in this Regulation. This should be the case, for instance, where the provider offers its service with the main purpose of facilitating illegal activities, for example by making explicit that its purpose is to facilitate illegal activities or that its services are suited for that purpose. The fact alone that a service offers encrypted transmissions or any other system that makes the identification of the user impossible should not in itself qualify as facilitating illegal activities.

And at para 30, there is specifically no general monitoring obligation on the service provider to police the content. In fact the wording is very strong: Telegram is under no obligation to take proactive measures.

(30) Providers of intermediary services should not be, neither de jure, nor de facto, subject to a monitoring obligation with respect to obligations of a general nature. This does not concern monitoring obligations in a specific case and, in particular, does not affect orders by national authorities in accordance with national legislation, in compliance with Union law, as interpreted by the Court of Justice of the European Union, and in accordance with the conditions established in this Regulation. Nothing in this Regulation should be construed as an imposition of a general monitoring obligation or a general active fact-finding obligation, or as a general obligation for providers to take proactive measures in relation to illegal content.

However, Telegram is obliged to act on an individual order from a national authority concerning specific content or accounts. So while it has no general monitoring or censorship obligation, it does have to act at the instigation of national authorities over individual content.

(31) Depending on the legal system of each Member State and the field of law at issue, national judicial or administrative authorities, including law enforcement authorities, may order providers of intermediary services to act against one or more specific items of illegal content or to provide certain specific information. The national laws on the basis of which such orders are issued differ considerably and the orders are increasingly addressed in cross-border situations. In order to ensure that those orders can be complied with in an effective and efficient manner, in particular in a cross-border context, so that the public authorities concerned can carry out their tasks and the providers are not subject to any disproportionate burdens, without unduly affecting the rights and legitimate interests of any third parties, it is necessary to set certain conditions that those orders should meet and certain complementary requirements relating to the processing of those orders. Consequently, this Regulation should harmonise only certain specific minimum conditions that such orders should fulfil in order to give rise to the obligation of providers of intermediary services to inform the relevant authorities about the effect given to those orders. Therefore, this Regulation does not provide the legal basis for the issuing of such orders, nor does it regulate their territorial scope or cross-border enforcement.

The national authorities can demand that content be removed, but only for “specific items”:

(51) Having regard to the need to take due account of the fundamental rights guaranteed under the Charter of all parties concerned, any action taken by a provider of hosting services pursuant to receiving a notice should be strictly targeted, in the sense that it should serve to remove or disable access to the specific items of information considered to constitute illegal content, without unduly affecting the freedom of expression and of information of recipients of the service. Notices should therefore, as a general rule, be directed to the providers of hosting services that can reasonably be expected to have the technical and operational ability to act against such specific items. The providers of hosting services who receive a notice for which they cannot, for technical or operational reasons, remove the specific item of information should inform the person or entity who submitted the notice.

There are extra obligations for Very Large Online Platforms, which have over 45 million users within the EU. These are not extra monitoring obligations on content, but rather extra obligations to ensure safeguards in the design of their systems:

(79) Very large online platforms and very large online search engines can be used in a way that strongly influences safety online, the shaping of public opinion and discourse, as well as online trade. The way they design their services is generally optimised to benefit their often advertising-driven business models and can cause societal concerns. Effective regulation and enforcement is necessary in order to effectively identify and mitigate the risks and the societal and economic harm that may arise. Under this Regulation, providers of very large online platforms and of very large online search engines should therefore assess the systemic risks stemming from the design, functioning and use of their services, as well as from potential misuses by the recipients of the service, and should take appropriate mitigating measures in observance of fundamental rights. In determining the significance of potential negative effects and impacts, providers should consider the severity of the potential impact and the probability of all such systemic risks. For example, they could assess whether the potential negative impact can affect a large number of persons, its potential irreversibility, or how difficult it is to remedy and restore the situation prevailing prior to the potential impact.

(80) Four categories of systemic risks should be assessed in-depth by the providers of very large online platforms and of very large online search engines. A first category concerns the risks associated with the dissemination of illegal content, such as the dissemination of child sexual abuse material or illegal hate speech or other types of misuse of their services for criminal offences, and the conduct of illegal activities, such as the sale of products or services prohibited by Union or national law, including dangerous or counterfeit products, or illegally-traded animals. For example, such dissemination or activities may constitute a significant systemic risk where access to illegal content may spread rapidly and widely through accounts with a particularly wide reach or other means of amplification. Providers of very large online platforms and of very large online search engines should assess the risk of dissemination of illegal content irrespective of whether or not the information is also incompatible with their terms and conditions. This assessment is without prejudice to the personal responsibility of the recipient of the service of very large online platforms or of the owners of websites indexed by very large online search engines for possible illegality of their activity under the applicable law.

(81) A second category concerns the actual or foreseeable impact of the service on the exercise of fundamental rights, as protected by the Charter, including but not limited to human dignity, freedom of expression and of information, including media freedom and pluralism, the right to private life, data protection, the right to non-discrimination, the rights of the child and consumer protection. Such risks may arise, for example, in relation to the design of the algorithmic systems used by the very large online platform or by the very large online search engine or the misuse of their service through the submission of abusive notices or other methods for silencing speech or hampering competition. When assessing risks to the rights of the child, providers of very large online platforms and of very large online search engines should consider for example how easy it is for minors to understand the design and functioning of the service, as well as how minors can be exposed through their service to content that may impair minors’ health, physical, mental and moral development. Such risks may arise, for example, in relation to the design of online interfaces which intentionally or unintentionally exploit the weaknesses and inexperience of minors or which may cause addictive behaviour.

(82) A third category of risks concerns the actual or foreseeable negative effects on democratic processes, civic discourse and electoral processes, as well as public security.

(83) A fourth category of risks stems from similar concerns relating to the design, functioning or use, including through manipulation, of very large online platforms and of very large online search engines with an actual or foreseeable negative effect on the protection of public health, minors and serious negative consequences to a person’s physical and mental well-being, or on gender-based violence. Such risks may also stem from coordinated disinformation campaigns related to public health, or from online interface design that may stimulate behavioural addictions of recipients of the service.

(84) When assessing such systemic risks, providers of very large online platforms and of very large online search engines should focus on the systems or other elements that may contribute to the risks, including all the algorithmic systems that may be relevant…

This is very interesting. I would argue that under Recitals 81 and 84, for example, the blatant use by Twitter and Facebook of both reach-limiting algorithms and plain blocking, to promote a pro-Israeli narrative and to limit pro-Palestinian content, was very plainly a breach of the EU Digital Services Act by deliberate interference with “freedom of expression and of information, including media freedom and pluralism”.

The legislation is very plainly drafted with the specific intent of outlawing the use of algorithms to interfere with freedom of speech and public discourse in this way.

But it is of course a great truth that the honesty and neutrality of prosecution services are much more important to what actually happens in any “justice” system than the actual provisions of legislation.

Only a fool would be surprised that the EU Digital Services Act is being shoehorned into use against Durov, apparently for lack of cooperation with Western intelligence services and being a bit Russian, and is not being used against Musk or Zuckerberg for limiting the reach of pro-Palestinian content.

It is also worth noting that Telegram is not considered to be a very large online platform by the EU Commission, which has to date accepted Telegram’s contention that it has fewer than 45 million users in the EU, so these extra obligations do not apply.

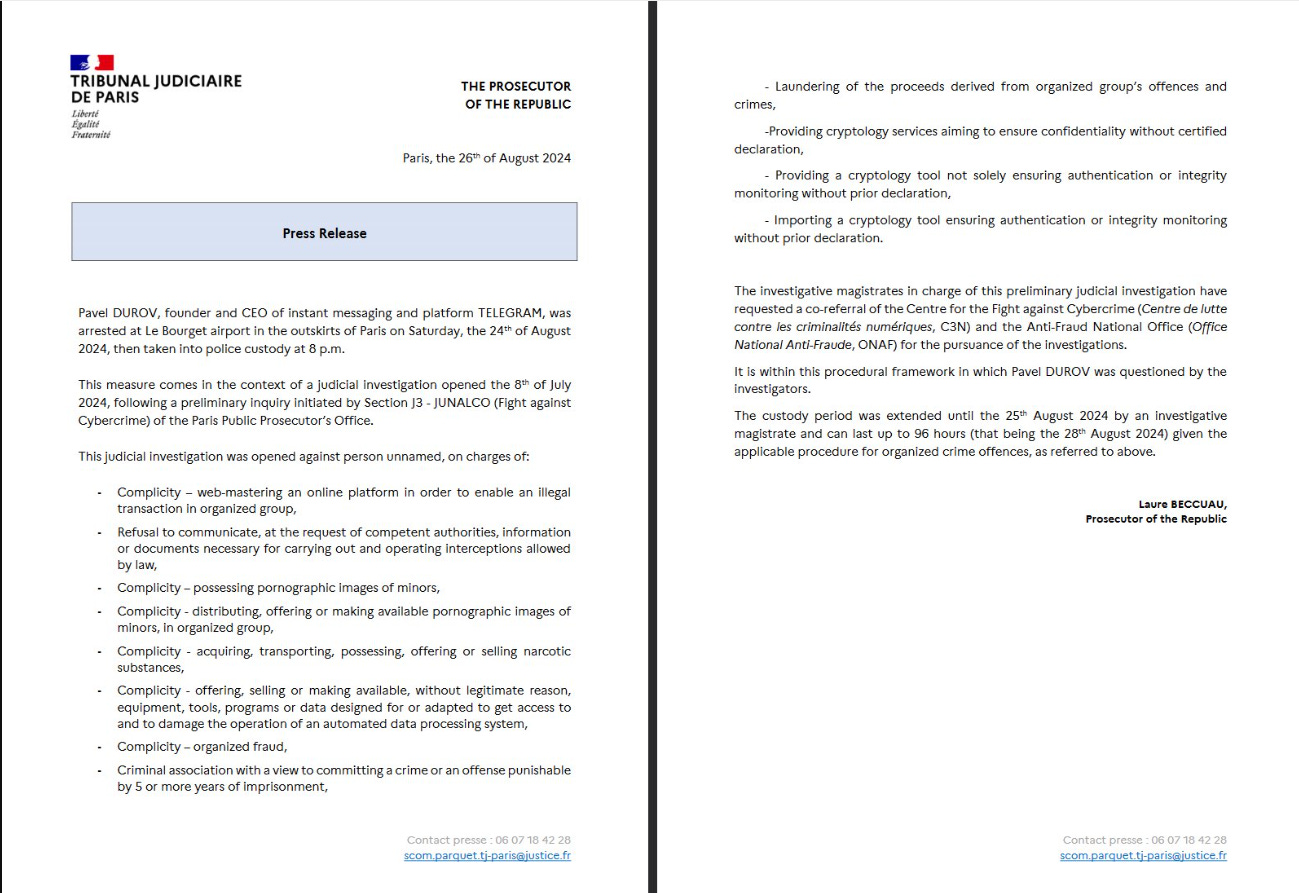

If we look at the charges against Durov in France, I therefore cannot see how they are in fact compatible with the EU Digital Services Act.

Unless he refused to remove or act over specific individual content specified by the French authorities, or unless he set up Telegram with the specific intent of facilitating organised crime, I do not see how Durov is not protected under Recitals 20 and 30 and other safeguards found in the Digital Services Act.

The French charges, however, appear to be extremely general and not to relate to particular specified communications. This is an abuse.

What the Digital Services Act does not contain is a general obligation to hand over unspecified content or encryption keys to police forces or security agencies. It is also remarkably reticent on “misinformation”.

Recitals 82 and 83 above obviously provide some basis for “misinformation” policing, but the Act in general relies on the rather welcome assertion that the rules governing what speech and discourse is legal should be the same offline as online.

So in short, the arrest of Pavel Durov appears to be a pretty blatant abuse, only very tenuously connected to the legal basis given as justification. It is simply a part of the current rising wave of authoritarianism in western “democracies”.